ML Foundations

Intelligence requires understanding how the world works. We model the world to make predictions, i.e., a model is something that lets us make predictions.

Our brain is a model. Remember the term "mental model"?

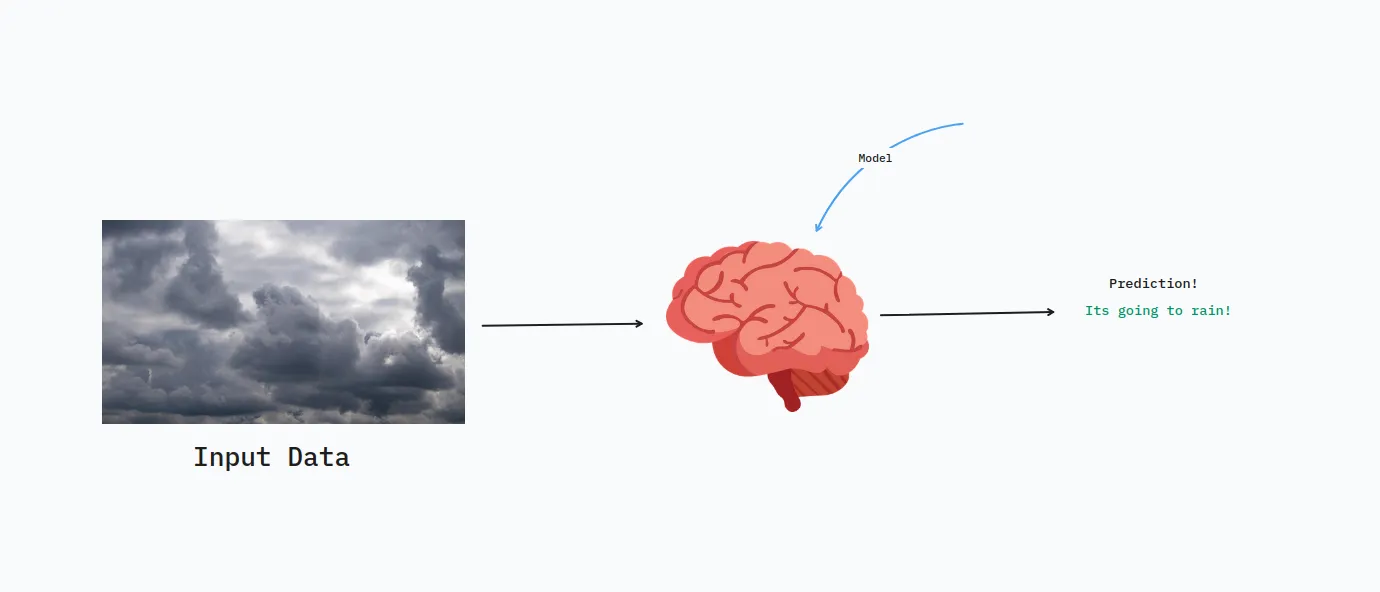

How can we make these models, i.e., things that can predict something the way organisms do? It turns out computers are a good base for it. We can use a computer to make predictions by building software models.

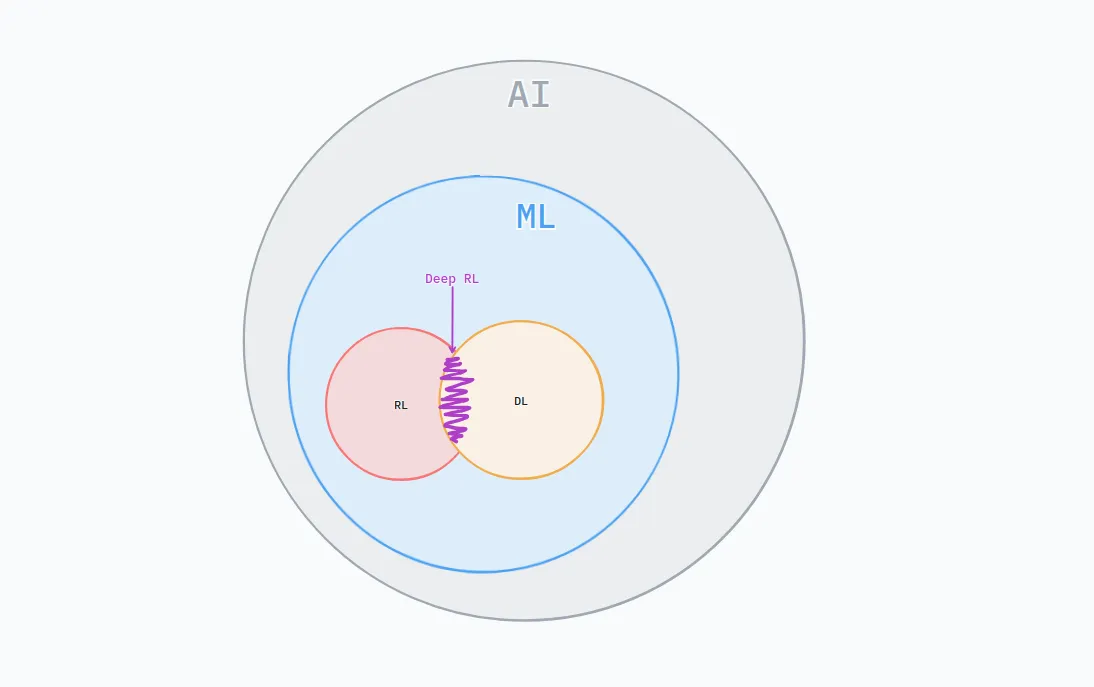

3 Different Ways Computers Can Learn:

- Machine Learning

- Deep Learning

- Reinforcement Learning

Computer learning: a computer can do things without being given explicit instructions.

ML: Machine Learning

Allows computers to learn tasks directly from data.

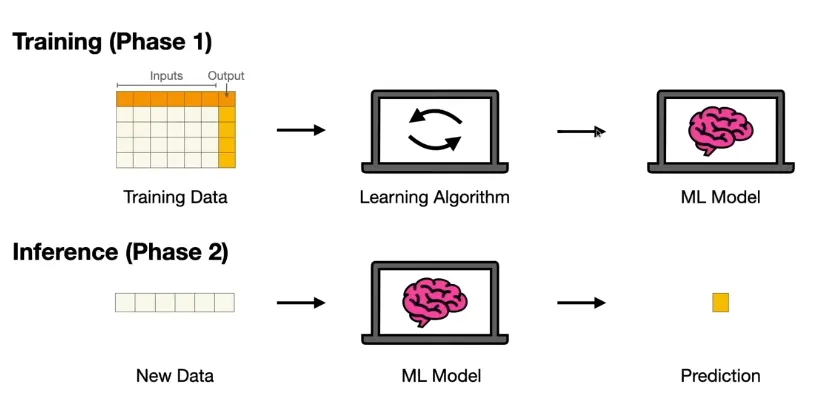

Given:

- Training input data

- Training expected output data

We need to find parameters that will help the model predict values as close as possible to the expected values. I sometimes think of parameters as knobs (see Perceptron).

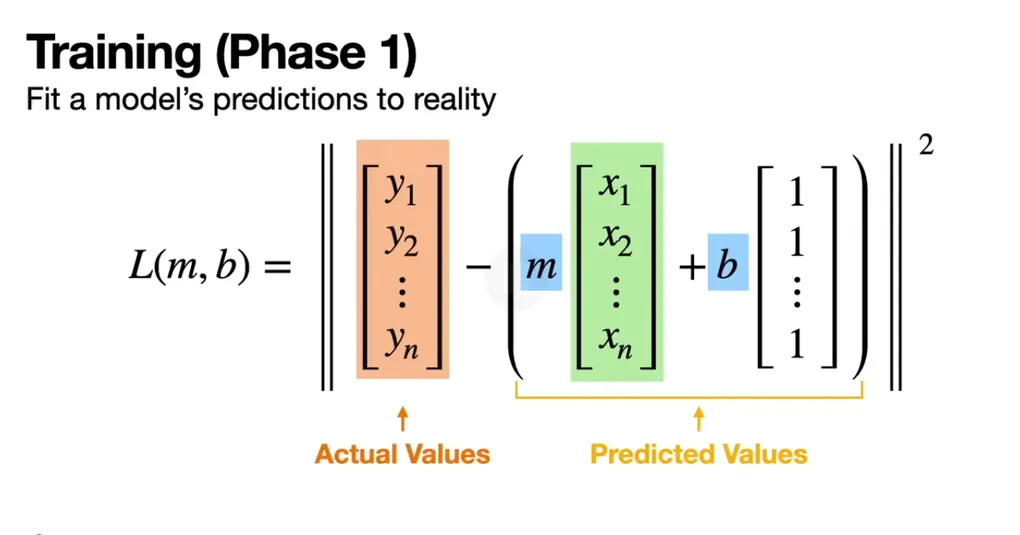

Loss = Expected Value - Prediction Value

The goal of the training phase is to find the knob settings that give a lower loss.

The loss value can be negative if the prediction value is bigger than the expected value, so let's square it.

Loss = (Expected Value - Prediction Value) ^ 2

Loss = (Y - X0) ^ 2

A better model fits its predictions to reality more closely.
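
The squared loss above can be sketched in a few lines of Python. The function names are made up for illustration, and averaging the loss over several training examples is an assumption (a common convention), not something stated above.

```python
def squared_loss(expected, predicted):
    """Squared difference between the expected value and the prediction."""
    return (expected - predicted) ** 2


def mean_squared_loss(expected_values, predicted_values):
    """Average squared loss over a whole training set (assumed convention)."""
    losses = [squared_loss(y, p) for y, p in zip(expected_values, predicted_values)]
    return sum(losses) / len(losses)


# Over-predicting and under-predicting by the same amount give the same loss.
print(squared_loss(10, 12))  # 4
print(squared_loss(10, 8))   # 4

# Two perfect predictions and one that is off by 2: (0 + 0 + 4) / 3
print(mean_squared_loss([1, 2, 3], [1, 2, 5]))
```

Because of the square, the loss is never negative, and a perfect prediction gives exactly 0.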

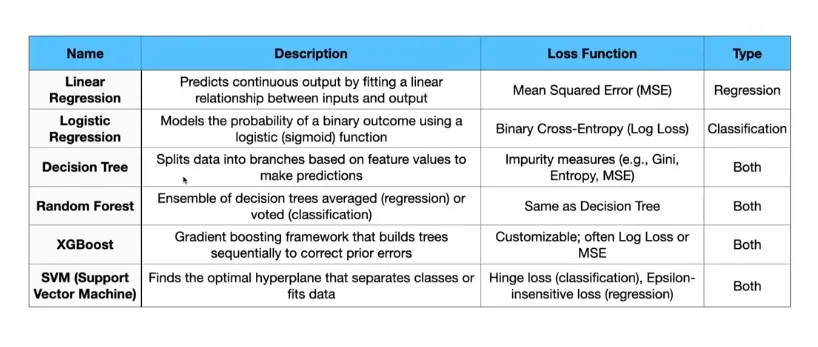

ML Techniques:

- Linear Regression

- Logistic Regression

- Decision Tree

- Random Forest

- XGBoost

- SVM (Support Vector Machine)

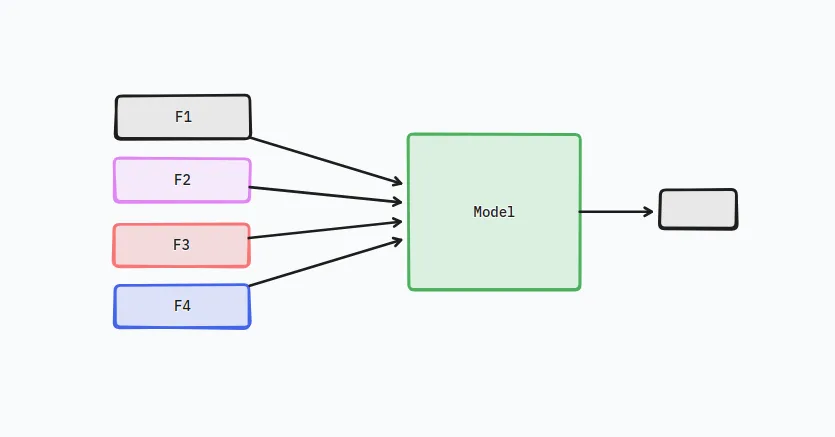

So machine learning is learning from data. The input values of each data point are called features, i.e., if a model takes 4 inputs, then it has 4 features.

For example, in the simple linear regression example given above, our model was:

Y = mX + c

i.e., it has 1 feature.
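
To make "finding the knobs" concrete, here is a minimal sketch that fits m and c for Y = mX + c by repeatedly nudging them in the direction that lowers the squared loss. The technique used is gradient descent, which is an assumption on my part (the text above does not name a method), and the data, learning rate, and number of epochs are hypothetical.

```python
def fit_line(xs, ys, learning_rate=0.01, epochs=5000):
    """Fit Y = mX + c by gradient descent on the mean squared loss."""
    m, c = 0.0, 0.0  # start with both knobs at zero
    n = len(xs)
    for _ in range(epochs):
        # Error of each prediction: expected value minus predicted value.
        errors = [y - (m * x + c) for x, y in zip(xs, ys)]
        # Gradients of the mean squared loss with respect to m and c.
        grad_m = sum(-2 * x * e for x, e in zip(xs, errors)) / n
        grad_c = sum(-2 * e for e in errors) / n
        # Turn each knob a little in the direction that reduces the loss.
        m -= learning_rate * grad_m
        c -= learning_rate * grad_c
    return m, c


# Hypothetical training data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

m, c = fit_line(xs, ys)
print(round(m, 3), round(c, 3))  # close to 2.0 and 1.0
```

Here m and c are the parameters the model learns, while X is the single feature.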

What if we have multiple features (inputs)? Are we giving equal weightage (weights) to each feature, or is one feature more important than another?
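
One way to picture this is a model with 4 features where each feature gets its own weight. The sketch below uses hypothetical, hand-picked weights (not learned ones) and assumes the features are on comparable scales, so a larger weight means that feature moves the prediction more.

```python
def predict(features, weights, bias):
    """Weighted sum of the features plus a bias term."""
    return sum(w * x for w, x in zip(weights, features)) + bias


# Hypothetical weights for a 4-feature model.
weights = [3.0, 0.5, 0.0, -1.0]
bias = 2.0

base = predict([1.0, 1.0, 1.0, 1.0], weights, bias)
print("base prediction:", base)

# Increase one feature at a time by 1 and see how far the prediction moves.
for i in range(4):
    bumped = [1.0, 1.0, 1.0, 1.0]
    bumped[i] += 1.0
    change = predict(bumped, weights, bias) - base
    print(f"feature {i}: prediction changes by {change}")
```

The feature with weight 3.0 shifts the prediction the most, and the feature with weight 0.0 is effectively ignored.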

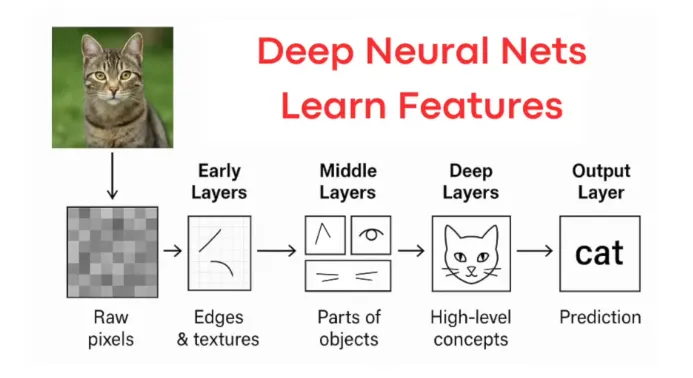

DL: Deep Learning

Neural Networks that learn optimal features on their own.

Remember:

Features are the data (input information).

Parameters are the weights that are learned.

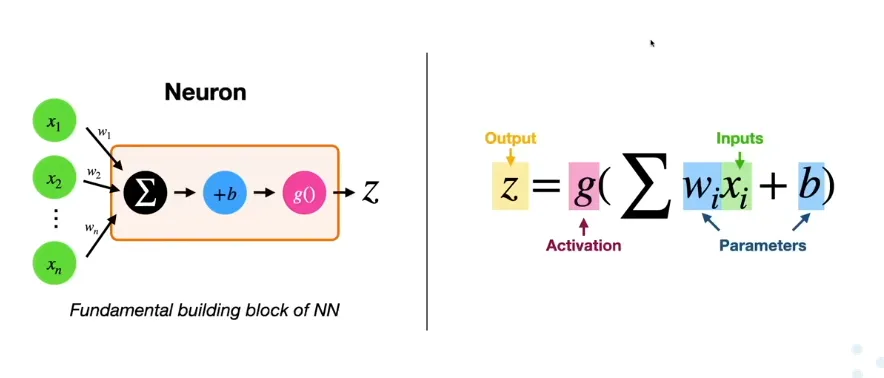

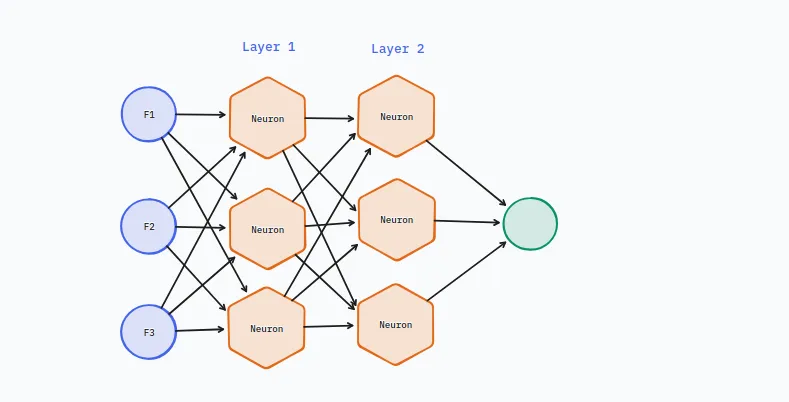

Neural Networks (NN)

In deep learning we use neural networks to learn optimal features, i.e., which features are more important than others.

A neural network is a series of operations that can approximate (practically) any function.

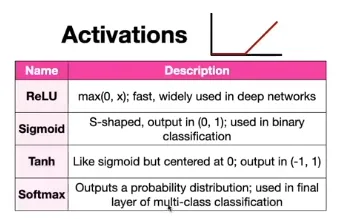

The weighted sum Sum(wi * xi) + b is also seen in linear regression, but g, the activation function, is a non-linear function, which gives the neural network the ability to approximate (practically) any function.
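
A single neuron of this kind can be sketched as follows. The sigmoid is used as the activation function g purely as an assumption for illustration, since no specific function is named above, and the inputs and weights are made up.

```python
import math


def sigmoid(z):
    """A common non-linear activation that squashes any number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias, activation=sigmoid):
    """Compute g(Sum(wi * xi) + b) for one neuron."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Weighted sum is 0.5 * 1.0 + (-0.25) * 2.0 + 0.0 = 0.0, and sigmoid(0.0) = 0.5.
print(neuron([1.0, 2.0], [0.5, -0.25], 0.0))  # 0.5
```

Without the `activation` call, the function would be the same weighted sum used in linear regression.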

Activation Function:

An activation function is the decision maker of a neural network. It sits at the end of a neuron and decides whether that neuron should “fire” (pass information forward) or stay dormant.

The non-linearity of the activation function lets the neural network learn complex decision boundaries, since it forces the model to become non-linear.
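
To see why the non-linearity matters, here is a tiny hypothetical network with two hidden neurons whose outputs are added together. The weights are hand-picked, and ReLU is an assumed choice of activation function. With no activation (identity) the network collapses to a straight line; with ReLU it produces a V shape that no single straight line can represent.

```python
def relu(z):
    """Pass positive values through, output 0 otherwise ("stay dormant")."""
    return max(0.0, z)


def identity(z):
    """No activation at all: the neuron stays purely linear."""
    return z


def tiny_network(x, activation):
    # Two hidden neurons with hand-picked weights +1 and -1 (no bias),
    # whose outputs are summed by the output neuron.
    h1 = activation(1.0 * x)
    h2 = activation(-1.0 * x)
    return h1 + h2


for x in [-2.0, -1.0, 0.0, 1.0, 2.0]:
    print(x, tiny_network(x, identity), tiny_network(x, relu))
```

With `identity` the output is 0 for every input, while with `relu` the output equals the absolute value of x.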

Artificial neural networks are inspired by the human brain. In your brain, a biological neuron receives electrical signals from neighbors. It doesn't just pass every tiny spark along; it waits until the signal is strong enough to cross a threshold, and then it "fires" an action potential.

These types of neurons are used in different types of neural networks:

- Vanilla: weighted sum + activation. Used in FFNNs and CNNs.

- LSTM: Long Short-Term Memory, an advanced neuron with memory and gates for long-term dependencies in sequences.

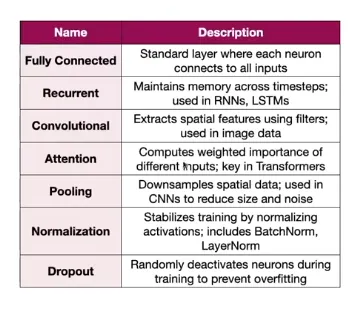

Types of layer structures:

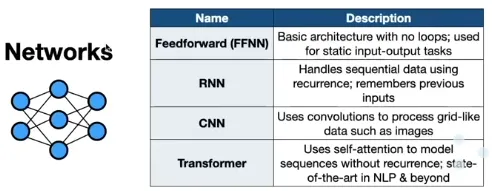

RNNs and CNNs are different types of neural networks because they have different types of layer structures.

Attention is also a type of neural network layer structure.

Types of Networks:

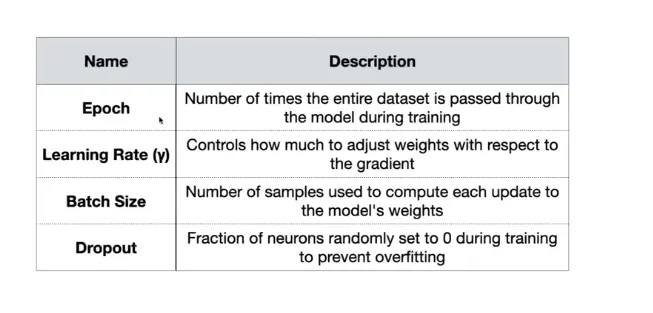

Hyperparameters:

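Values that guide the training process. Unlike parameters, they are chosen before training rather than learned, for example the learning rate or the number of layers.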
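
As a rough illustration, the sketch below trains a single parameter w to minimise a toy loss (w - target)^2 using gradient descent. The loss, the target value, and the specific settings are all hypothetical. The point is that `learning_rate` and `steps` are hyperparameters we pick ourselves, while w is what gets learned.

```python
def train(learning_rate, steps, start=0.0, target=3.0):
    """Minimise the toy loss (w - target)^2 and return the final w."""
    w = start
    for _ in range(steps):
        gradient = 2 * (w - target)    # derivative of the loss with respect to w
        w -= learning_rate * gradient  # step size is set by the hyperparameter
    return w


# A modest learning rate lets w settle close to the target of 3.0.
print(train(learning_rate=0.1, steps=50))

# A learning rate that is too large makes every step overshoot, so w diverges.
print(train(learning_rate=1.1, steps=50))
```

The same model and the same loss give very different results depending only on the hyperparameters.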

Reinforcement Learning

The computer learns from trial and error, by acting and seeing what reward it gets, rather than from a prepared dataset.
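
A small sketch of trial and error is a slot-machine ("multi-armed bandit") setup: the program does not know which machine pays out most often, so it tries them, tracks the average reward of each, and gradually favours the best one. The epsilon-greedy strategy, the win probabilities, and all names below are assumptions chosen for illustration.

```python
import random


def run_bandit(win_probabilities, steps=3000, epsilon=0.1, seed=0):
    """Learn which arm pays best purely from trial and error (epsilon-greedy)."""
    rng = random.Random(seed)
    n_arms = len(win_probabilities)
    counts = [0] * n_arms       # how many times each arm was tried
    estimates = [0.0] * n_arms  # running average reward of each arm
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)  # explore: try a random arm
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])  # exploit
        # The environment answers with a reward of 1 (win) or 0 (lose).
        reward = 1.0 if rng.random() < win_probabilities[arm] else 0.0
        counts[arm] += 1
        # Update the running average for the arm that was just tried.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return estimates, counts


# Hypothetical win probabilities, hidden from the learner.
estimates, counts = run_bandit([0.1, 0.9, 0.3])
best_arm = max(range(len(estimates)), key=lambda a: estimates[a])
print("best arm:", best_arm)
print("estimated win rates:", [round(e, 2) for e in estimates])
print("times each arm was tried:", counts)
```

No expected outputs are given to the learner up front; the only feedback is the reward that follows each action.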

References: